As well as recognising COVID-19 as a viral pandemic, the World Health Organization was quick to declare it an infodemic: a superabundance of online and offline information with the potential to undermine public health efforts. Here, Dr. Elinor Carmi, Dr. Myrto Aloumpi and Dr. Elena Musi discuss how philosophical fallacies can be instrumentalised in response to the COVID-19 infodemic, helping those who come into contact with fake news to resist its rhetorical appeal.

– “What does it mean, though?” my mum asked in the group.

– “I saw on Facebook that people say that you can only invite 6 friends, but if they are from the same household then it can be more”, my brother chimed in.

– “But what about pubs? I’ve got Craig’s birthday on Saturday and I think he invited like 20 ppl or something”, my older sister always asks the important questions.

– “omg I started a weird cough yesterday but it’s probably nothing. I think? Eeeek”.

– “LOCK HER UP! No but really you have to stay at home Elena”

– “Chill bro, I don’t have the symptoms”.

– “it’s maybe a good time to check your eyesight, maybe you should drive to Wales”.

– “haha… no but really I don’t have any fever or anything”.

– “yeah but I saw on Facebook yesterday Shelly’s brother has Covid and he didn’t have any fever or dry cough”.

– “You do realise there are other diseases, right?”.

I bet my family WhatsApp group is not unique. Whether you are still trying to catch up on all the information and guidelines around the Covid-19 pandemic, or feeling overwhelmed and trying to avoid it – information keeps on coming.

What we don’t always realise in our day-to-day communication is that rhetoric is always at work: in speech, in images and audiovisual content, and even, in a way, in algorithmic ordering. Every time we attempt to persuade, we use rhetoric, whether consciously or not.

The problem starts when misinformation (false information that is not created with the intention of causing harm) and disinformation (false information that is created with the intention of causing harm) swarm our media outlets. Both cause real harm to people’s lives and wellbeing, physical as well as mental.

Although fabricated news can be more dangerous, the general public is less likely to trust it than news that is merely misleading. And since 59% of fake news contains neither fabricated nor imposter content, but rather reconfigured misinformation, the impact of misinformation is huge. It is no wonder that human fact-checkers cannot keep up with the fast spread of misinformation online: they are themselves struggling to find criteria for identifying misleading content.

Fallacies

Our proposition is simple: fake news, which can take the form of both disinformation and misinformation, is news that seems valid but is not, while fallacies are arguments that seem valid but are not. A famous example is Carneades’ snake and rope: in a dark room, something that gives the impression of being a rope might just as easily be a snake, and only by probing further, say by poking it with a stick, can we judge which impression is plausible. Thus, if a news article contains fallacies, the likelihood that it is fake increases. People who are able to recognise fallacies in news are likely to think twice before accepting its content unquestioningly. In this way they can become their own fact-checkers.

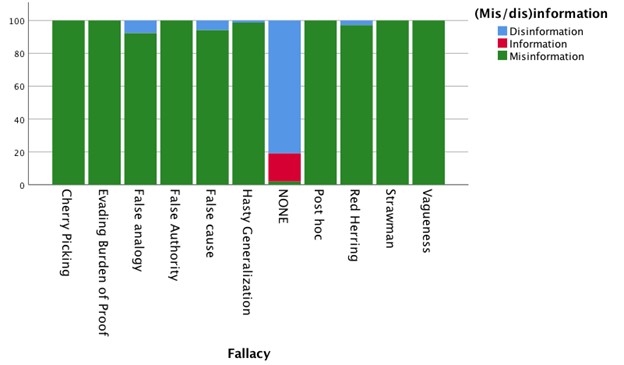

We collected news items about COVID-19 that were fact-checked by the Ferret, Snopes, Health Feedback, Full Fact and PolitiFact between the beginning of the pandemic and the end of June 2020, resulting in 1,135 claims. Of these, 9.3% were found to be correct information, 46.7% misinformation and 44.1% disinformation. Through a multilevel analysis, we identified 10 fallacy types that strongly correlate with the grey area of misinformation, as shown in the column graph above.

Several of these fallacies, for example cherry picking and false authority, will probably ring a bell for many readers, but we have also summarised them here.

Social media posts and news articles often contain grains of truth, but, as our data sample shows, these claims frequently fail to provide enough evidence (19.8%, evading the burden of proof); generalise too quickly (14.5%, hasty generalisation); select evidence arbitrarily (20.0%, cherry picking); or use vague and ambiguous terms (14.5%, vagueness).

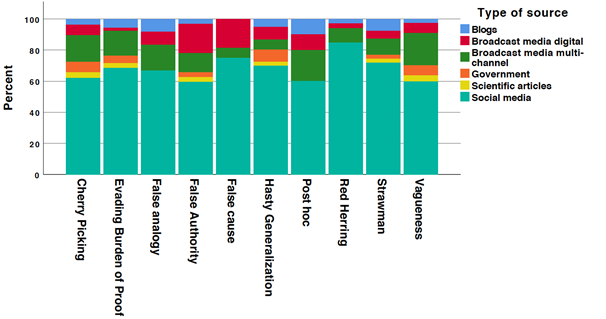

Although all types of media source spread fallacious moves, social media stand out across the whole range of fallacies, as our second column graph shows.

This is not surprising, since the affordances of social media encourage quick sharing without verification, amplify sensationalised information and make it easy to take things out of context to seed misinformation.

Let’s take a recent example. On Monday 9th November several media outlets published the ‘breaking’ news that Pfizer’s Covid-19 “vaccine was more than 90 percent effective in preventing Covid-19”. However, as Full Fact explained, “the trial isn’t yet complete, so this could change as the study goes on and more participants catch Covid-19”. The news claim, though factually accurate, can therefore lead people to infer mistakenly that a reliable vaccine has been found. To complicate matters, different fact-checkers rate this type of news differently, often with uninformative labels.

This leaves people in the dark, without tools to understand for themselves what is wrong with a piece of news or how to recognise the same problem in the future. That is why we believe the rating process needs to change. If we start by identifying the fallacies in misinformation and disinformation, it is much easier to understand the kind of error or manipulation at play. Knowing that ‘cherry picking’ is a diversion fallacy, meaning that a cherry-picking story selects some evidence and ignores the rest, is more constructive than knowing that the same story is labelled “Half True” or “Mostly False”. By identifying which fallacies are used in each case, people will have tools to critically evaluate the different online manipulations they encounter.

Opening a dialogue

To put these insights into practice, we are working on two chatbots that will help both citizens and journalists learn to recognise and avoid fallacies in an engaging way. The chatbot is designed as an interactive game in which three philosophers challenge you and teach you how to spot the ten fallacies in news. While we think that more fact-checking organisations, along with a reliable and trustworthy news media landscape, are important, we also think that people should be able to learn how to identify online manipulations. This is about developing data thinking, which involves a critical understanding of the online environment.

Ultimately, we believe this should be part of a larger education programme, and that citizens today should be enabled to develop data literacies, as we show in our report. Together with data doing (citizens’ everyday engagement with data) and data participating (citizens’ proactive engagement with data and their networks of literacy), data thinking enables citizens to make more informed decisions that can help them navigate the online environment more safely, both individually and with their communities. You can already play with our chatbot here! We encourage you to play on your own or to make it a family activity in which you quiz each other. Learning together and helping each other understand how news is shaped for different purposes can help us gain the skills needed to sift truth from fiction and to build, collectively, a healthier, stronger and smarter democracy.

About the Authors

Elinor Carmi

Elinor Carmi is a Research Associate at the University of Liverpool, UK, working on several projects: 1) “Me and My Big Data – Developing Citizens’ Data Literacies” (Nuffield Foundation); 2) “Being Alone Together: Developing Fake News Immunity” (UKRI); 3) digital inclusion with the UK’s Department for Digital, Culture, Media and Sport (DCMS). Dr. Carmi was invited by the World Health Organization (WHO) as a scientific expert to take part in the closed discussions to establish the foundations of infodemiology.

Elena Musi

Elena Musi is a Lecturer in Artificial Intelligence and Communication at the University of Liverpool. Elena’s research focuses on argumentation mining in the context of new technologies and their global impact, with a particular focus on (mis)information and human-computer interaction: she is currently PI on the UKRI ESRC project “Being Alone Together: Developing Fake News Immunity”.

Myrto Aloumpi

Myrto Aloumpi is a Classicist specialising in ancient Greek rhetoric and public speaking. She completed her DPhil at Oxford in 2018 and was Lecturer in Classics at Somerville College (Oxford) and at the University of Liverpool. She is currently a Research Assistant on the UKRI ESRC project “Being Alone Together: Developing Fake News Immunity”.