Key Takeaways:

- People consider creativity to be inherently human.

- AI art models are beginning to have an impact in the real world, as seen with the prize-winning Midjourney picture.

- Generating fine art is different to producing digital designs.

- Stable Diffusion and DALL·E 2 use the same underlying approach, known as a diffusion model: a computer program that learns to create new images by looking at lots of existing images.

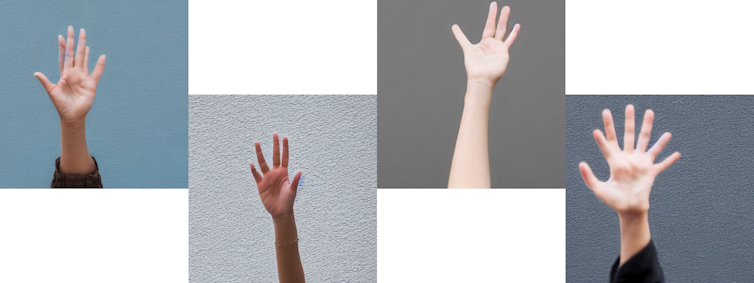

- AI art models still struggle to draw hands correctly.

People consider creativity to be inherently human. However, artificial intelligence (AI) has reached the stage where it can be creative as well.

A recent competition attracted anger from artists after it awarded a prize to an artwork created by an AI model known as Midjourney. And such software is now freely available thanks to the release of a similar model called Stable Diffusion, which is the most efficient of its kind to date.

Unions of creative practitioners such as Stop AI Stealing the Show have for some time been raising concerns about the use of AI in creative fields. But could AI actually replace human artists?

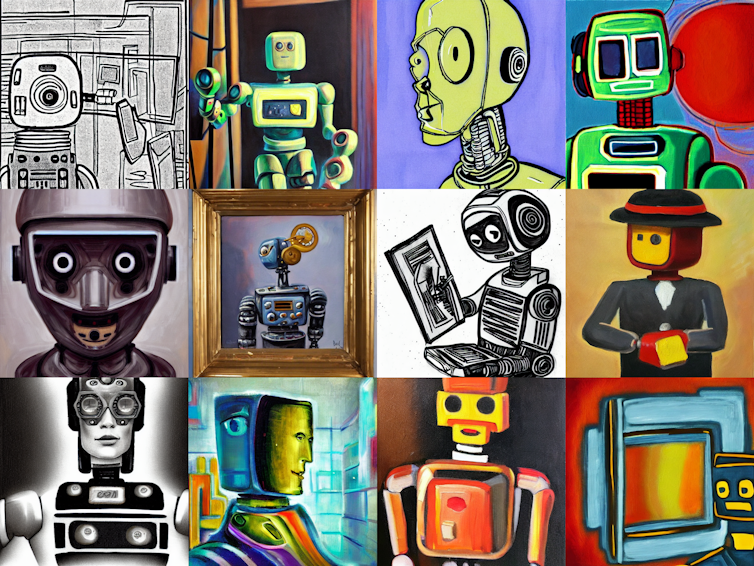

These new AI models can produce endless possibilities. Each of the robot images shown above is unique, yet all were generated by Stable Diffusion from similar user requests.

There are two ways to use these AI artists: write a short text prompt, or provide an image alongside the prompt to give more guidance. From a 14-word prompt, I was able to generate several logo ideas for a made-up company that delivers fruit. In just under 20 minutes. On my mid-range laptop.

As you can see from the results above, Stable Diffusion struggles to create art involving words. And some of the fruit are a bit funky.

Yet there is no way I could have produced anything remotely like this without using AI or employing the help of a graphic designer. I couldn’t have created the robot pictures myself either.

The potential of this technology hasn’t gone unnoticed – the startup responsible for Stable Diffusion, Stability AI, is targeting a US$1 billion (£900 million) valuation. These AI models are also beginning to have an impact in the real world, as seen with the prize-winning Midjourney picture. Indeed, where AI really excels is in producing pieces of fine art that combine different elements and styles.

Yet while AI may do most of the legwork for you, using these models still requires skill. Sometimes a prompt doesn’t generate quite the image that you wanted. Or the AI can be used alongside other tools, only making up a small part of a larger pipeline.

And generating fine art is different to producing digital designs. Stable Diffusion is better at drawing landscapes than logos.

Why Stable Diffusion is a game changer

AI models are typically trained to create art using a dataset containing a staggering 5.85 billion images. This vast amount of data is needed so the AI can learn about image content and artistic concepts. And it takes a very long time to process.

For Stable Diffusion, it took 150,000 hours (just over 17 years) of processor time. However, this can be reduced to less than a month of real time by training in parallel on large compute clusters (collections of powerful computers that act as a single device).
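The arithmetic behind those figures can be checked with a short sketch. The 150,000-hour figure comes from the text above. The 30-day month, the assumption that the work splits perfectly across processors, and the function names are all simplifications made up here for illustration.

```python
import math

HOURS_PER_YEAR = 24 * 365   # ignoring leap years
HOURS_PER_MONTH = 24 * 30   # assumption: a 30-day month


def processor_years(processor_hours: float) -> float:
    """Convert processor-hours into years of time on a single processor."""
    return processor_hours / HOURS_PER_YEAR


def min_processors(processor_hours: float, wall_clock_hours: float) -> int:
    """Smallest number of processors that fits the time budget.

    Assumes the work divides perfectly between processors, which real
    clusters never quite achieve.
    """
    return math.ceil(processor_hours / wall_clock_hours)


if __name__ == "__main__":
    total_hours = 150_000  # figure quoted in the article
    print(f"{processor_years(total_hours):.1f} years on one processor")
    print(f"{min_processors(total_hours, HOURS_PER_MONTH)} processors for one month")
```

Under these assumptions, roughly 209 processors running at once would bring the training time down to a month. Real clusters lose some time to coordination between machines, so the true number would be somewhat higher.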

Stability AI also provides an online tool called DreamStudio that allows you to use its AI model at a cost of around US$0.01 per image. In comparison, to use competitor OpenAI’s art model, DALL·E 2, the cost is over ten times that.

Both models use the same underlying approach, known as a diffusion model: a computer program that learns to create new images by looking at lots of existing images. However, Stable Diffusion has a lower computational cost, meaning it requires less time to train and uses less energy.
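To give a rough feel for the idea, here is a toy sketch of the "noising" half of a diffusion model. During training, existing images are gradually blurred into random noise, and the model learns to undo that process step by step, which is what later lets it turn fresh noise into a new image. This is a simplified illustration and not Stable Diffusion's actual code: the function names, the four-number "image" and the noise level `beta` are all assumptions chosen for readability, and the learning step itself is left out.

```python
import math
import random


def add_noise(pixels, beta, rng):
    """One forward diffusion step.

    Shrinks each pixel value slightly and mixes in Gaussian noise
    with variance beta.
    """
    keep = math.sqrt(1.0 - beta)
    spread = math.sqrt(beta)
    return [keep * p + spread * rng.gauss(0.0, 1.0) for p in pixels]


def forward_diffusion(pixels, steps, beta=0.05, seed=0):
    """Apply the noising step repeatedly.

    Returns a list holding the original image followed by each
    progressively noisier version.
    """
    rng = random.Random(seed)
    history = [list(pixels)]
    for _ in range(steps):
        history.append(add_noise(history[-1], beta, rng))
    return history


if __name__ == "__main__":
    image = [1.0, -1.0, 1.0, -1.0]  # a made-up four-pixel "image"
    for step, noisy in enumerate(forward_diffusion(image, steps=3)):
        print(step, [round(p, 2) for p in noisy])
```

Run for enough steps, the original pattern is drowned out entirely. A real model is trained on billions of such noised images to predict the noise that was added, so that it can remove it again.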

Plus, you can’t actually download and run OpenAI’s model yourself, only interact with it via a website. Stable Diffusion, meanwhile, is an open-source project that anyone can play around with. So it enjoys the benefit of rapid development by the online coding community, such as improvements to the models, user guides and integration with other tools. This has already been happening in the weeks since Stable Diffusion’s release in August 2022.

The future of art?

While vast improvements have been made in the last five years, there are still things that AI art models struggle with. Words in their artworks are recognisable but often gibberish. Similarly, AI struggles to render human hands.

There’s also the obvious constraint that these models can only produce digital art. They can’t work with oils or pastels like people can. In the way that vinyl has made a comeback, technology may initially create a swing towards a new form, but over time people always seem to circle back to the original form with the highest quality.

Ultimately, as previous research has found, AI models in their current form are more likely to act as new tools for artists than as digital replacements for creative humans. For example, the AI could generate a range of images to serve as a starting point, which can then be selected from and improved by a human artist.

This combines the strengths of AI art models (rapid iteration and creation of images) with the strengths of human artists (a vision for the piece of art and overcoming the problems with AI models). This is especially true in the case of commissioned art when a specific output is needed. AI on its own is unlikely to produce what you need.

However, there is still a danger for creatives. Digital artists who choose not to use AI may be left behind, unable to keep up with the rapid iteration and lower costs of AI-enhanced artists.